The Agentic AI Advantage

Why Organisations That Deploy AI Agents Correctly Will Outperform—and What “Getting It Right” Means.

May 01, 2026

In FTI Consulting’s “2026 Private Equity AI Radar,” only 7% of respondents report enterprise-scale deployment, but 95% note that programmes in production meet or exceed original business cases.1

The term “AI” covers a broad range of tools, but it most commonly refers to generative AI. Generative AI responds to prompts and input, relying on pattern recognition to produce a single-step response, whereas agentic AI can operate autonomously and proactively, using multi-step reasoning.2

A competitive gap is opening between organisations deploying true agentic AI and those that are not. The term “agentic AI” has been applied broadly and inconsistently across the market. Many deployments described as agentic are workflow automation under a different name. That means the gap in understanding is as consequential as the gap in deployment.3

The difference is not just in capability; it is also in outcome. Some organisations are using AI to execute work more efficiently. Others are using it to reduce how much work needs to be done in the first place. For boards, CFOs and executive teams, this is now a question of control and accountability, not just technology.

What the Gap Looks Like

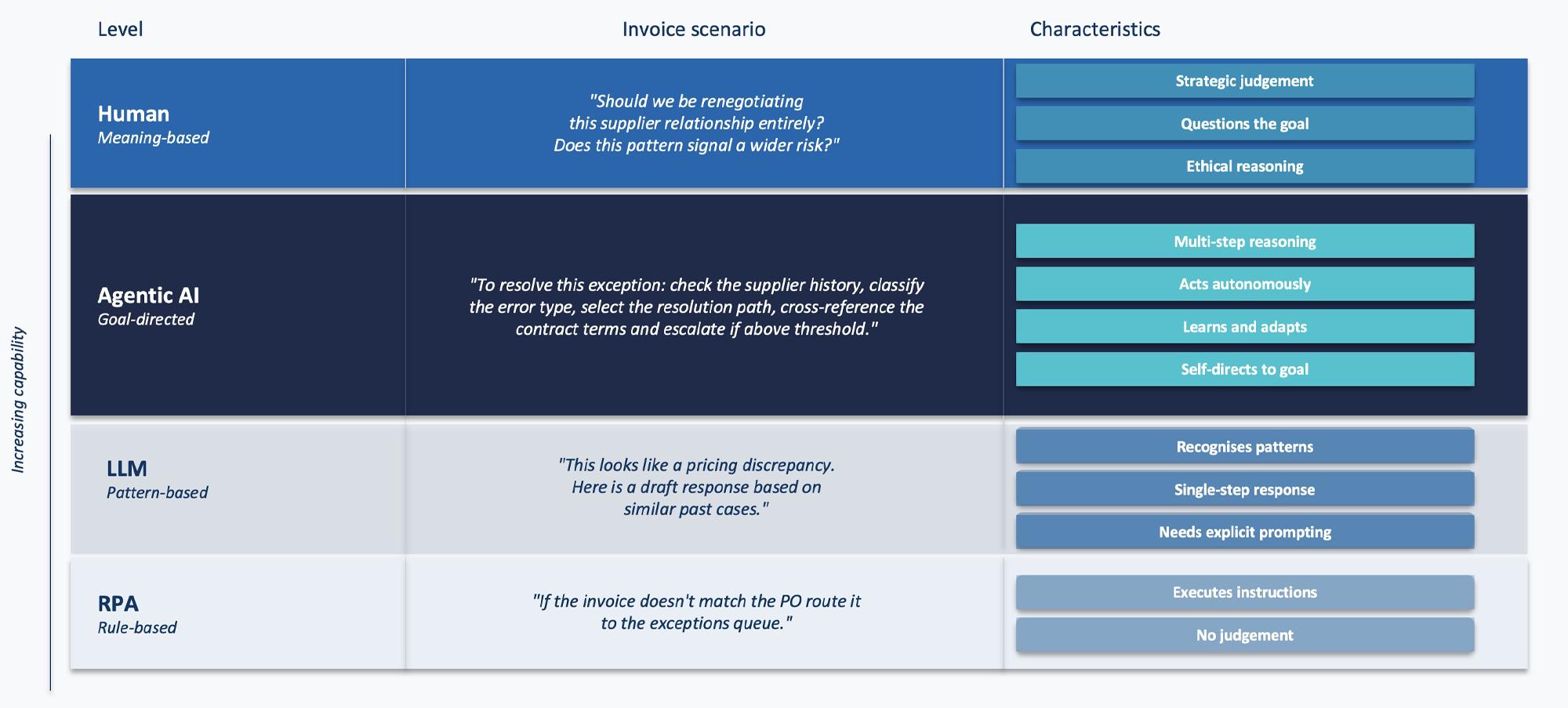

Four levels of capability define the current AI landscape: rule-based robotic process automation (RPA), pattern-based large language models (LLMs), goal-directed agentic AI and meaning-based human judgement. Understanding where your deployments sit is the starting point for everything that follows.

Figure 1: The AI Capability Ladder

Note: The capability ladder, from rule-based automation through to human judgement, is illustrated with an invoicing use case.

The performance gap between a workflow tool and a genuine agent compounds over time and at scale because the agent learns from every exception it handles, while the workflow tool continues to apply the same rules. Consider a finance team handling supplier invoice exceptions. A workflow tool detects a mismatch, routes it to a queue, sends a templated message and logs the exception. This workflow is fast and consistent but inflexible, and it learns nothing: it simply reapplies the same rules and parameters.

A genuine AI agent reasons about the exception type, chooses a different resolution path for each, cross-references contract terms and either resolves autonomously or surfaces a structured recommendation. It learns. The pattern of exceptions across suppliers feeds back into procurement decisions. Contract terms that generate systematic disputes become visible.

The difference between a workflow system and an agentic one is not just speed but also outcome. A workflow system resolves more exceptions at speed, whilst an agentic system identifies why those exceptions occur, linking them to specific suppliers, contract terms or behaviours, and then reduces their frequency over time. One processes exceptions more efficiently. The other generates fewer exceptions to begin with.
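The contrast above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of any real product: the `InvoiceException` fields, the queue name and the cause labels are all hypothetical, and the tally stands in only for the feedback step (spotting which suppliers and causes keep recurring), not for an agent's reasoning.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class InvoiceException:
    """Hypothetical record of one supplier invoice mismatch."""
    supplier: str
    cause: str  # illustrative labels such as "price_mismatch" or "missing_po"
    amount: float


def workflow_route(exc: InvoiceException) -> str:
    """Rule-based handling: every exception goes to the same queue."""
    return "finance-exceptions-queue"  # assumed queue name


def recurring_causes(
    history: list[InvoiceException], min_count: int = 2
) -> list[tuple[str, str, int]]:
    """Feedback step: list (supplier, cause) pairs that keep recurring."""
    counts = Counter((e.supplier, e.cause) for e in history)
    return [(s, c, n) for (s, c), n in counts.most_common() if n >= min_count]


# Made-up sample data for illustration only.
history = [
    InvoiceException("Acme Ltd", "price_mismatch", 120.0),
    InvoiceException("Acme Ltd", "price_mismatch", 95.5),
    InvoiceException("Borealis GmbH", "missing_po", 300.0),
    InvoiceException("Acme Ltd", "price_mismatch", 40.0),
]

for supplier, cause, n in recurring_causes(history):
    print(f"{supplier}: {cause} occurred {n} times")
```

The rule-based function returns the same route however often a problem repeats, while the tally surfaces the supplier and cause behind most of the volume, which is the kind of signal that could feed back into procurement decisions.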

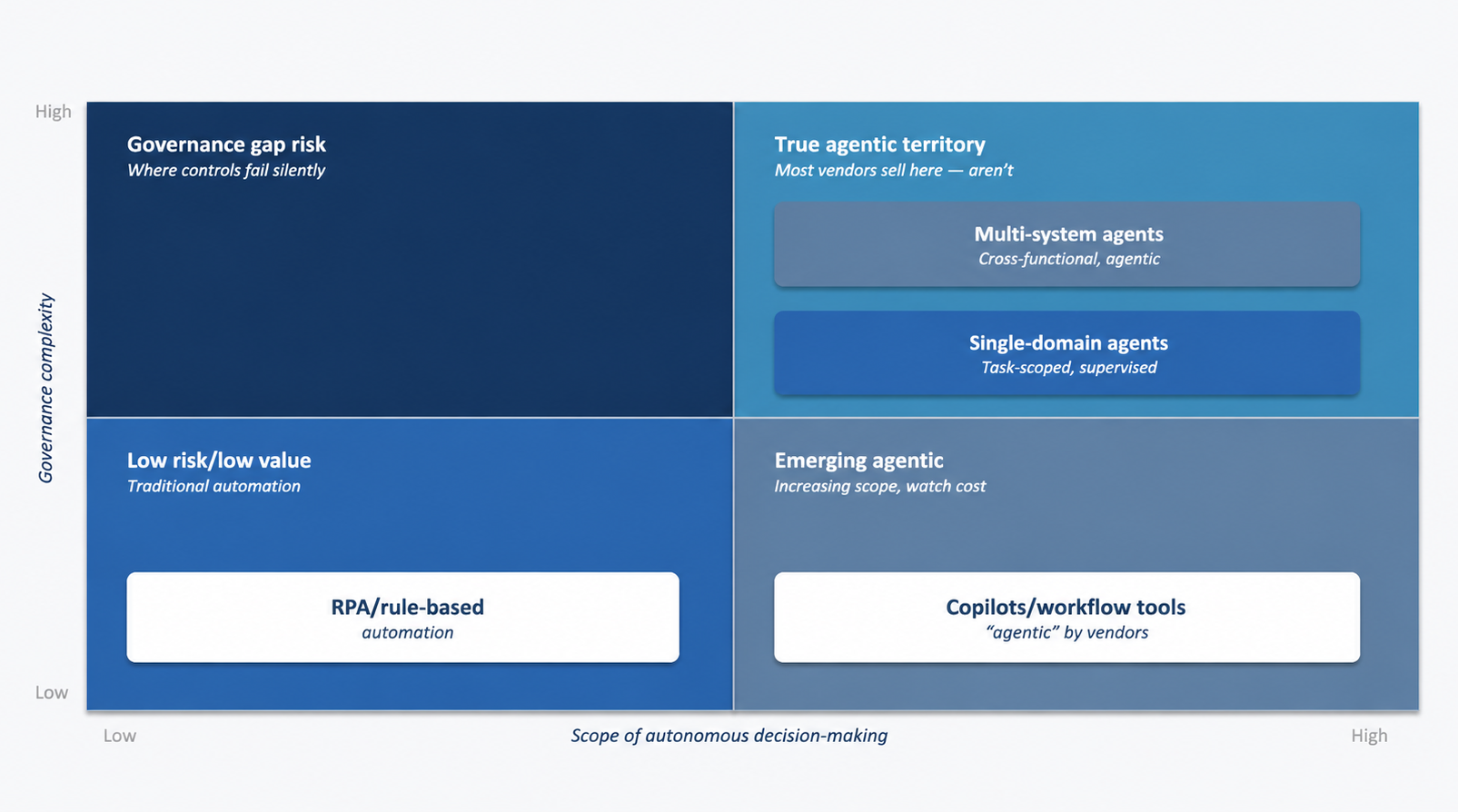

Figure 2: Autonomous Decision-Making and Governance Complexity

Agentic AI does not just improve how work is executed. It also reduces how much work needs to be done in the first place.

Knowing which category your current systems sit in requires a structured assessment against a clear definition of agentic capability rather than alignment with a vendor’s labelling. That assessment is the prerequisite to everything that follows.

The starting point is not a technology audit. It is two operational questions.

- Does the system determine its own next steps, or does it act only when explicitly told what to do?

- If the system made a consequential decision today, could you reconstruct what it decided and why, without asking the vendor?

If the answer to the first is that the system operates with any degree of self-direction, and the answer to the second is uncertain, the organisation is already operating in agentic territory, whether or not it is labelled that way. That is why this conversation cannot be delegated to technology teams alone.

The Foundation the Advantage Runs On

Nobody describes financial controls as the cost of running a business. They are the infrastructure that makes scaling a business possible. The governance foundation for agentic AI is the same thing. It is not what you build after you have captured the advantage, but what makes capturing the advantage possible at scale.

Governance is not the cost of agentic AI. It is the condition for scaling it.

The evidence for this is already visible. Autonomous working sessions nearly doubled in length in just three months, and AI agents are already operating in finance, healthcare and cybersecurity, domains with significantly higher stakes than the software engineering workflows where most adoption began.4 The most striking finding is that as task complexity increases, human oversight decreases. On simple tasks such as editing a line of code, 87% of AI agent actions involve some form of human involvement. On complex, high-stakes tasks such as automated security analysis, that oversight falls to 67%. Put differently, the share of actions taken with no human involvement rises from 13% to 33%. The more consequential the decision, the less oversight is happening in practice. This is not a feature of the technology. It is what happens when capable systems are deployed without the foundation to govern them.

This gap in human oversight must be addressed to ensure high-impact decisions are not taken without scrutiny. The answer is not to scale manual effort linearly but to establish a governance architecture that harnesses the capabilities of agentic AI within a targeted domain. Part of that gap is closed by the agent itself. Experienced users often auto-approve more sessions while also interrupting more turns, indicating that active monitoring is replacing step-by-step permissions.5 This points to a shift from humans being in the loop to being on the loop, enabling scaling beyond user bottlenecks.

Organisations that build the foundation correctly can navigate this shift without unnecessary operational risk. They scale with oversight intact because the governance architecture was designed to scale alongside the capability. In practice, that foundation comes down to four elements:

- Clear ownership of decision-making: Where are systems making decisions today? What authority do they have? Where are the boundaries? If this is not explicitly defined, it is implicitly undefined and the competitive advantage becomes a liability the moment something goes wrong.

- Cost visibility from the start: Agentic systems consume resources at the task level, at each reasoning step, at each system interaction, and at each parallel process. Costs that are unattributed at pilot scale can become material and unpredictable at production scale. Retrofitting cost controls after deployment is significantly harder than building them in.

- Defined oversight models: Where must a human be involved? Under what conditions can a system act autonomously? What triggers escalation? These are governance design decisions that determine whether the organisation can defend its deployments to an audit committee, a regulator or a board.

- End-to-end auditability: If a system makes a consequential decision, can you reconstruct what happened, including inputs, reasoning, actions and outcomes? Across jurisdictions, regulators are converging on the same core questions: What did the system decide? Why did it decide that? What data did it use? And who is accountable?6 Organisations that cannot answer these questions are exposed regardless of how well the system performs.
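Two of these elements, defined oversight and end-to-end auditability, lend themselves to a short illustration. The Python sketch below is hypothetical throughout: the `DecisionRecord` fields, the 10,000 value limit, the risk labels and the system and owner names are assumptions chosen for the example, not a regulatory schema or a recommended threshold.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    """Hypothetical audit entry: inputs, reasoning, action, outcome, owner."""
    system: str
    accountable_owner: str
    inputs: dict
    reasoning: str
    action: str
    outcome: str
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def needs_human_review(amount: float, risk: str, limit: float = 10_000.0) -> bool:
    """Assumed oversight rule: escalate on high risk or above a value limit."""
    return risk == "high" or amount > limit


def to_audit_line(record: DecisionRecord) -> str:
    """Serialise a record as one JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


# Illustrative values only.
record = DecisionRecord(
    system="invoice-agent",
    accountable_owner="Head of Finance Operations",
    inputs={"invoice_id": "INV-001", "amount": 12_500.0},
    reasoning="Invoice total exceeds the contracted price.",
    action="escalated" if needs_human_review(12_500.0, "medium") else "auto_resolved",
    outcome="pending_review",
)
print(to_audit_line(record))
```

Writing the escalation rule as an explicit function and storing the accountable owner on every record is one way to answer what was decided, why, with what data and by whom, without relying on the vendor.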

These four elements are the conditions under which agentic capability compounds rather than creates liability. The organisations that treat them as a launchpad rather than a constraint are the ones that will move faster, scale further and recover more quickly when things go wrong. The absence of these controls defers risk to a point where failures surface under scrutiny rather than in controlled conditions.

What Executive Teams Should Do Now

It’s important not to wait for perfect conditions. Instead, start by identifying where genuine agentic capability can create structural advantage and building the foundation to deploy it safely.

Identifying these opportunities starts with two questions: Where in the organisation are there high-volume, multistep processes that currently require significant human judgement to navigate? And where would the compounding effect of a system that learns from every decision create measurable value over time? Finance operations, procurement, risk monitoring, regulatory reporting and customer escalation management are the areas most organisations should examine first. Not because they are the easiest but because they are where the difference between a workflow tool and a genuine agent is largest and where its impact will be most visible over time.

Once these areas are identified, six actions determine whether deployment in them becomes a competitive advantage or a liability:

- Map current deployments against the capability ladder, not against what vendors claim: Where do your systems actually sit: rule-based, pattern-based or genuinely goal-directed?

- Define decision rights and authority levels: Where are systems acting autonomously today? What are they authorised to decide? Ambiguity here is where control failures can originate.

- Establish cost baselines and ownership: Token costs at scale are material and unpredictable. Every agentic deployment needs a named owner for its cost envelope, not just its outputs.

- Define human-in-the-loop thresholds based on consequence and risk, not technical convenience: These are governance design decisions that must be explicit before deployment.

- Test auditability before you need it: If a system made a consequential error today, could you reconstruct what happened? Run that test now, not after a failure surfaces it under pressure.

- Build the operational layer that keeps the foundation functioning: Agentic systems require active monitoring of task completion rates, escalation frequency, token spend and output quality. This is what prevents a successful pilot from turning into a production liability and what maintains the live audit trail regulators will ask for.
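The monitoring described in the last action can be sketched as a small summary over task logs. This is a minimal illustration, assuming a hypothetical `TaskLog` record with an owner, completion and escalation flags and a token count; real deployments would draw these from their own telemetry, and output quality is left out because it has no single obvious measure.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskLog:
    """Hypothetical log entry for one agent task."""
    owner: str  # named owner of the cost envelope
    completed: bool
    escalated: bool
    tokens: int


def summarise(logs: list[TaskLog]) -> dict:
    """Compute completion rate, escalation rate and token spend per owner."""
    if not logs:
        raise ValueError("no task logs to summarise")
    tokens_by_owner: dict[str, int] = defaultdict(int)
    for log in logs:
        tokens_by_owner[log.owner] += log.tokens
    total = len(logs)
    return {
        "completion_rate": sum(log.completed for log in logs) / total,
        "escalation_rate": sum(log.escalated for log in logs) / total,
        "tokens_by_owner": dict(tokens_by_owner),
    }


# Made-up sample data for illustration only.
logs = [
    TaskLog("procurement", True, False, 1200),
    TaskLog("procurement", True, True, 3400),
    TaskLog("finance-ops", False, True, 800),
    TaskLog("finance-ops", True, False, 600),
]
summary = summarise(logs)
print(summary)
```

Attributing token spend to a named owner at the task level is what lets costs that look negligible in a pilot be tracked before they become material in production.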

These are the steps that determine whether your organisation is building toward a competitive advantage or accumulating the debt that will prevent it. If an AI system in your organisation made a consequential error tomorrow, could you reconstruct what it decided, why, and who was accountable?

Boards and executive teams do not need more agentic AI discussion; instead, they need clarity on where real autonomy exists, what control model it requires and where it can create a durable advantage.

The companies that pull ahead will not be those that simply automate more. They will be those that reduce how much non-value-adding work their organisation needs to do, because their systems are continuously improving the decisions that generate that work. They have a clear view of the current reality and what is required to scale AI in a way that strengthens long-term performance. At its core, this is an assessment of direction and exposure, clarifying whether the organisation is building a durable advantage or accumulating risks that may only become visible under pressure.

Footnotes:

1: “2026 Private Equity AI Radar,” FTI Consulting (17 March 2026)

2: Downie, Amanda and Finn, Teaganne, “Agentic AI vs. generative AI,” IBM

3: “Translating AI Investment into Real Outcomes,” FTI Consulting (7 October 2026)

4: McCain, M. et al., “Measuring AI agent autonomy in practice,” Anthropic (18 February 2026)

5: Ibid.

6: “Regulation (EU) 2024/1689 on Artificial Intelligence (AI Act),” European Parliament and Council (12 July 2024)
