AI Agents: How to Capture Value While Maintaining Control

April 07, 2026

AI agents have rapidly become a focal point in business and technology discussions, despite the lack of a consistent definition. For this article, AI agents are software systems that can independently plan and execute multi-step tasks on behalf of a user, using judgment to decide what actions to take, which tools to use, and when to ask for human input. In practice, the term is used to describe both advanced systems capable of planning, tool use and autonomous action across enterprise environments, and, in some cases, existing automation repackaged with updated terminology.

These capabilities are no longer confined to enterprise software; consumer AI products now offer agent-like functionality to everyday users. As a result, employees are developing expectations and usage patterns before their organizations have frameworks in place. For leaders responsible for risk, governance and technology investment, this combination of ambiguity and accessibility presents a challenge that requires a thorough understanding of what agents are and are not, where they are emerging, where they create value and where they may introduce risk.

What AI Agents Are (and Are Not)

“AI agent” is often used broadly, sometimes to describe genuine tool-using systems and sometimes to relabel familiar capabilities. A process becomes agentic when it involves multi-step reasoning, each decision affects the final output and the path through those decisions is not predetermined. In practical terms, AI assistants generate content in direct response to a user query; agents advance work by making decisions and taking multi-step actions.

Most organizations already recognize two adjacent categories:

- LLM-powered chat assistants and chatbots: These systems answer questions, draft text, summarize and help users think. Examples include consumer-style assistants (ChatGPT, Claude, Gemini) and embedded copilots inside productivity and enterprise tools (Microsoft Copilot in Microsoft 365; conversational assistants inside CRM and service platforms). They can be highly useful, but they typically do not execute work across systems by default, and they often rely on a user to decide the next step and carry it out.

- Workflow automation: These tools move work through predefined steps, usually within systems of record. Examples include ServiceNow workflows, Salesforce Flow, Microsoft Power Automate, UiPath RPA and common IT and finance processes such as ticket routing, user provisioning, invoice matching and approvals. They are reliable and auditable, but they can be brittle when real-world exceptions are frequent or inputs are incomplete.

Agents introduce a third mode: goal-directed execution with bounded autonomy. They can interpret a goal, plan a sequence of steps, use tools, verify outcomes and iterate when a step falls short. The defining characteristic is not intelligence, but rather authorized autonomy: planning, tool use and verification within explicit boundaries.
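To make "authorized autonomy" concrete, the following is a minimal sketch rather than a reference design. It assumes a hypothetical `BoundedAgent` class, a fixed plan supplied by the caller and toy tools; none of these names come from a real product. The point it illustrates is that the boundaries (an allowlist of tools and a step budget) are enforced outside the model, and that a failed check leads to escalation instead of silent continuation.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class BoundedAgent:
    """Toy agent: executes planned steps only within explicit boundaries."""

    tools: dict[str, Callable[[str], str]]  # hypothetical tool registry
    allowed: set[str]                       # tools this agent is authorized to call
    max_steps: int = 5                      # hard cap on autonomous actions
    log: list[str] = field(default_factory=list)  # simple evidence trail

    def run(self, plan: list[tuple[str, str]], verify: Callable[[str], bool]) -> str:
        for step, (tool, arg) in enumerate(plan):
            if step >= self.max_steps:
                self.log.append("stopped: step budget exhausted")
                return "escalated"
            if tool not in self.allowed or tool not in self.tools:
                self.log.append(f"blocked: {tool} is not an authorized tool")
                return "escalated"
            result = self.tools[tool](arg)
            self.log.append(f"{tool}({arg}) -> {result}")
            if not verify(result):
                # A real agent might re-plan here; this sketch hands off to a human.
                self.log.append("verification failed")
                return "escalated"
        return "completed"


# Illustrative usage: the agent may look records up but may not change them.
tools = {"lookup": lambda x: f"found {x}", "update": lambda x: f"updated {x}"}
agent = BoundedAgent(tools=tools, allowed={"lookup"})
status = agent.run([("lookup", "T-1"), ("update", "T-1")], verify=lambda r: bool(r))
print(status, agent.log)
```

In this sketch the plan is static and re-planning is omitted for brevity; the design choice worth noting is that permissions and limits live in ordinary code that can be reviewed and audited.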

A practical test for “is this actually an agent?” is to ask three questions:

- Can it decide the next action without a user prompting each step?

- Can it use tools that change state (create/update/submit), not just retrieve information?

- Can it verify results and adjust its plan when something fails?

If the answer to these is mostly “no,” it is usually an assistant or workflow automation, even if it is marketed as an agent.
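The three-question test above can be written down as a simple checklist. This is an illustrative sketch only: the `SystemProfile` fields and the `classify` function are hypothetical names, and reading "mostly no" as "fewer than two yes answers" is an assumption, not a rule stated in the test itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemProfile:
    """Answers to the three questions for one system under review."""

    decides_next_action: bool        # acts without a user prompting each step
    uses_state_changing_tools: bool  # can create/update/submit, not just retrieve
    verifies_and_adjusts: bool       # checks results and changes plan on failure


def classify(profile: SystemProfile) -> str:
    yes_count = sum(
        [
            profile.decides_next_action,
            profile.uses_state_changing_tools,
            profile.verifies_and_adjusts,
        ]
    )
    # Assumption: "mostly no" means fewer than two "yes" answers.
    if yes_count >= 2:
        return "likely an agent"
    return "assistant or workflow automation"


# Illustrative usage: a chat assistant that only drafts text on request.
chat_assistant = SystemProfile(False, False, False)
print(classify(chat_assistant))
```

A checklist like this is most useful during vendor evaluation, where the marketing label and the observed behavior can be compared directly.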

Where Agents Show Up in Companies

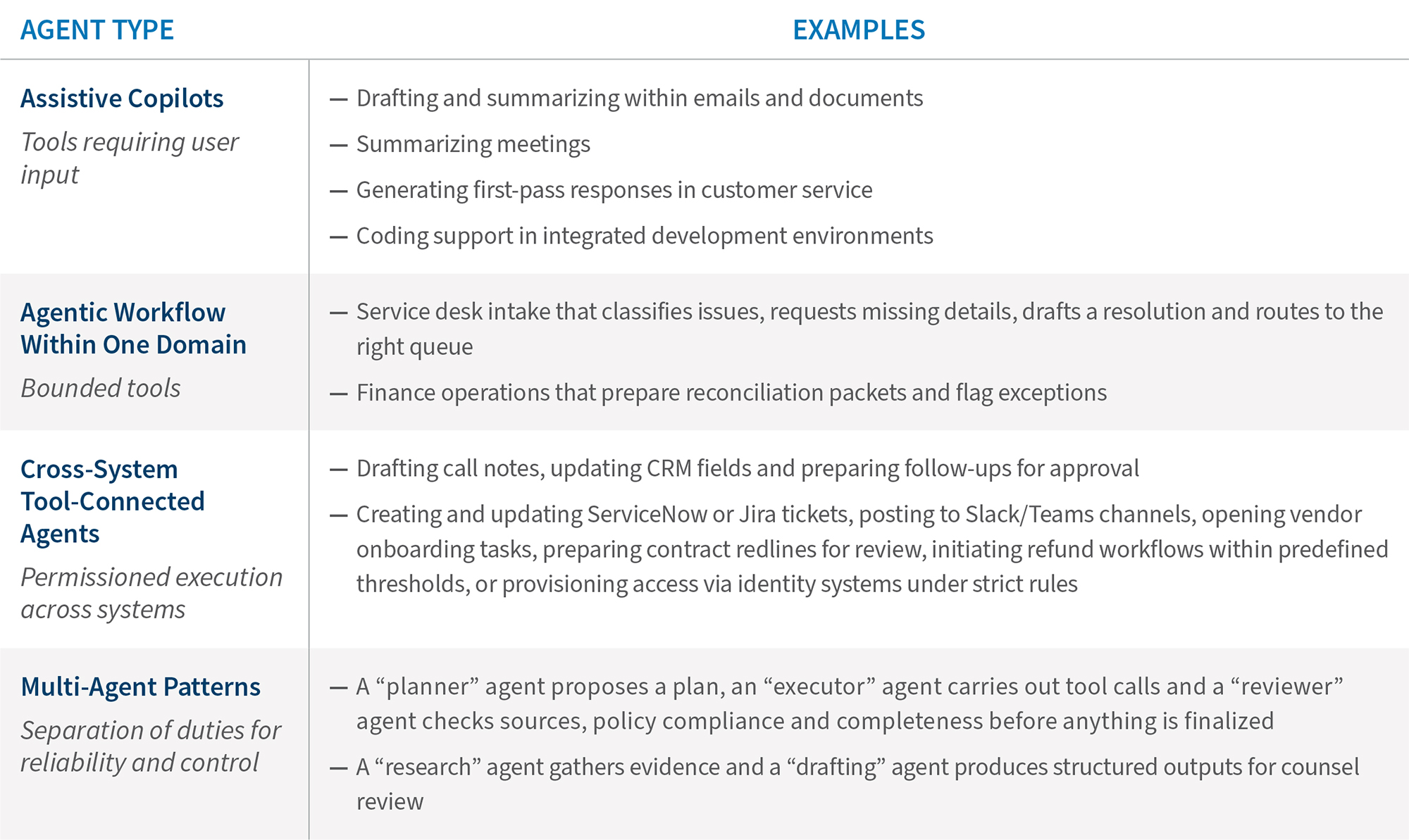

Agents typically follow a maturity ladder.¹ The same organization may have all four types (listed below) at once, in different functions.

A practical observation for leaders: the inflection point comes when agents become tool-connected. That is where the benefits become more tangible, and where permissions, auditability and accountability start to matter as much as model performance.

Risks Leadership Should Expect

Five risk areas are common in early deployments:

- Reliability risk: wrong answers, weak sourcing, chained vulnerabilities, overconfidence, and missing context – especially when outputs appear authoritative.

- Unintended action risk: tool misuse that updates the wrong record, triggers the wrong workflow, or sends the wrong message.

- Data handling risk: overly broad access, sensitive data exposure via connectors, prompts, or logs, plus privilege and retention complications.

- Security and abuse risk: prompt injection and tool exploitation, including malicious instructions embedded in emails, documents, or tickets. Agents can become an attack surface because they can act.

- Liability ambiguity: when an agent takes an action that produces a negative outcome, the chain of responsibility – from the user who set the objective, to the team that configured permissions, to the vendor whose model exercised judgment – is often unclear. That ambiguity is itself a risk.

As autonomy increases, risk shifts from model behavior alone to operational, security and governance exposure. That is why permissions, accountability and evidence need to be designed from the start.

What to Do Now, and What to Expect Next

Two moves can help organizations capture value while maintaining control. First, prioritize a small portfolio of use cases to prevent agent sprawl. Start where volume is high, downside is bounded and outcomes can be measured: fewer handoffs, fewer stuck items, faster completion, more consistent outputs. Avoid unmanaged proliferation of one-off agents that multiply access paths and create inconsistencies across functions. A focused portfolio is easier to govern, easier to measure and easier to learn from.

Second, put practical governance in place that matches the level of agent autonomy. This is not about slowing adoption. It is about clarifying decision rights and risk: who approves use cases, who grants tool access, who sets boundaries for autonomous action and who is accountable for outcomes. The goal is to enable adoption while avoiding ambiguity when something goes wrong. Organizations that wait for a perfect framework before starting will fall behind; organizations that start without any framework will create problems that are expensive to unwind.

Agents are not new in concept, but there has been a clear shift in how they surface inside organizations, moving from “assistive” copilots to embedded, tool-connected workflows inside core enterprise platforms. As more agents gain permissioned access to systems of record, leaders must ask not only what models can generate, but also how the organization constrains and evidences what the system is allowed to do.

In practice, many organizations are converging on stronger controls for high-impact steps, especially where an agent can change state in a business system. That typically includes step-up authentication at the moment of action, transaction confirmations for material changes, threshold-based approvals and dual-control patterns for sensitive operations (for example, a second reviewer for payments, access provisioning, external communications, or regulator-facing outputs).
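One way to picture these controls is as a small policy function that sits between an agent's proposed action and its execution. The sketch below is hypothetical throughout: the `ProposedAction` fields, the `SENSITIVE_KINDS` set, the ordering of the rules and the 1,000 threshold are assumptions chosen for illustration, and step-up authentication is left out for brevity.

```python
from dataclasses import dataclass
from enum import Enum


class Control(Enum):
    AUTO_EXECUTE = "auto_execute"    # read-only, no extra control
    CONFIRM = "confirm"              # transaction confirmation by the user
    APPROVAL = "approval"            # threshold-based approval
    DUAL_CONTROL = "dual_control"    # second reviewer required


# Assumption: sensitive categories mirror the examples named in the text.
SENSITIVE_KINDS = frozenset(
    {"payment", "access_provisioning", "external_communication", "regulator_output"}
)


@dataclass(frozen=True)
class ProposedAction:
    kind: str            # hypothetical action category
    changes_state: bool  # True if it creates, updates or submits something
    amount: float = 0.0  # monetary or other materiality measure, if any


def required_control(action: ProposedAction, threshold: float = 1000.0) -> Control:
    if not action.changes_state:
        return Control.AUTO_EXECUTE
    if action.kind in SENSITIVE_KINDS:
        return Control.DUAL_CONTROL
    if action.amount >= threshold:
        return Control.APPROVAL
    return Control.CONFIRM


# Illustrative usage: an agent proposes a payment.
print(required_control(ProposedAction("payment", True, 250.0)))
```

Keeping the policy in one small, testable function means the rules can be reviewed by risk and compliance teams without reading the agent's prompts or model configuration.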

The direction is consistent even if mechanisms vary: tighter guardrails at decision points, clearer limitations on autonomous execution and a more explicit evidence trail as autonomy increases. Organizations that treat this as an operating model challenge, not just a model performance challenge, will be better positioned to capture value while maintaining defensible oversight.

Footnote:

1: Gartner press release, “Gartner Predicts 40% of Enterprise Apps Will Feature Task-Specific AI Agents by 2026, Up from Less Than 5% in 2025,” Gartner (Aug. 26, 2025).
